Google recently held its Search On live event, where it announced several updates to its core product, the search engine.

Google has big plans for the further development of its search tool, and the next step is to make visual search more advanced with the help of artificial intelligence.

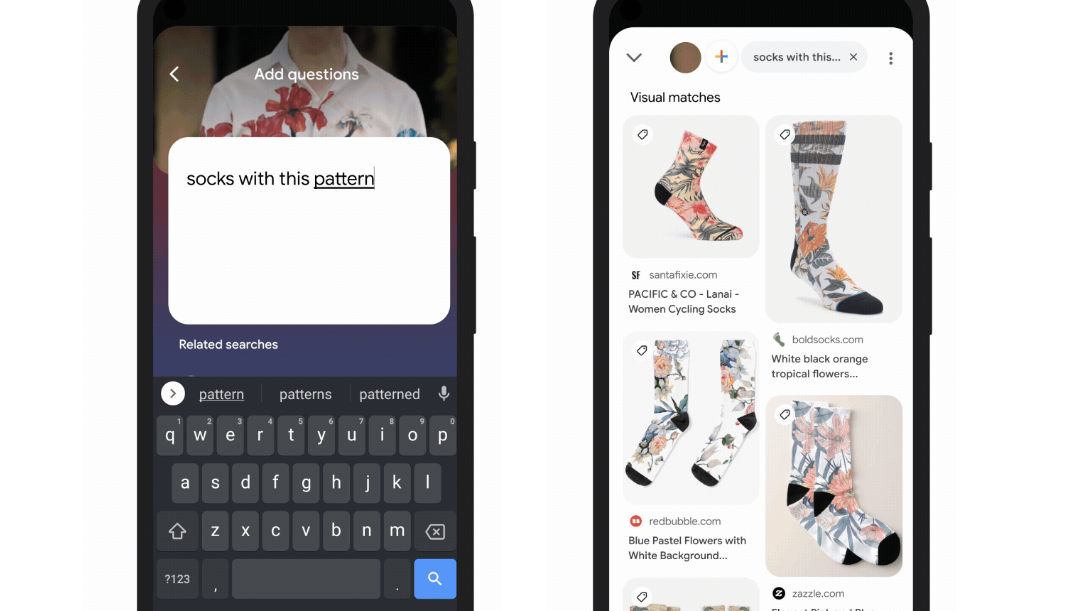

Ask questions about photos

In the coming months, the company will make it possible to search by asking questions about what is in an image.

For example, if you take a picture of a dress in a style you like, you can search for other types of clothing in the same style. This way, you don't have to describe what you see in words alone, which, as Google points out, can be very difficult.

Visual search is already possible with Google Lens, which lets you search Google using images taken with your phone's camera. What is new is the ability to actually ask questions about what is shown in the photo.

The new feature is built into Lens and is activated by tapping the Lens icon while the image is displayed on the screen.

Repairs are another example. Instead of having to search for the part that needs fixing, you can simply take a picture of the damaged part and ask Google how to fix it, which takes you directly to relevant DIY videos or similar content.

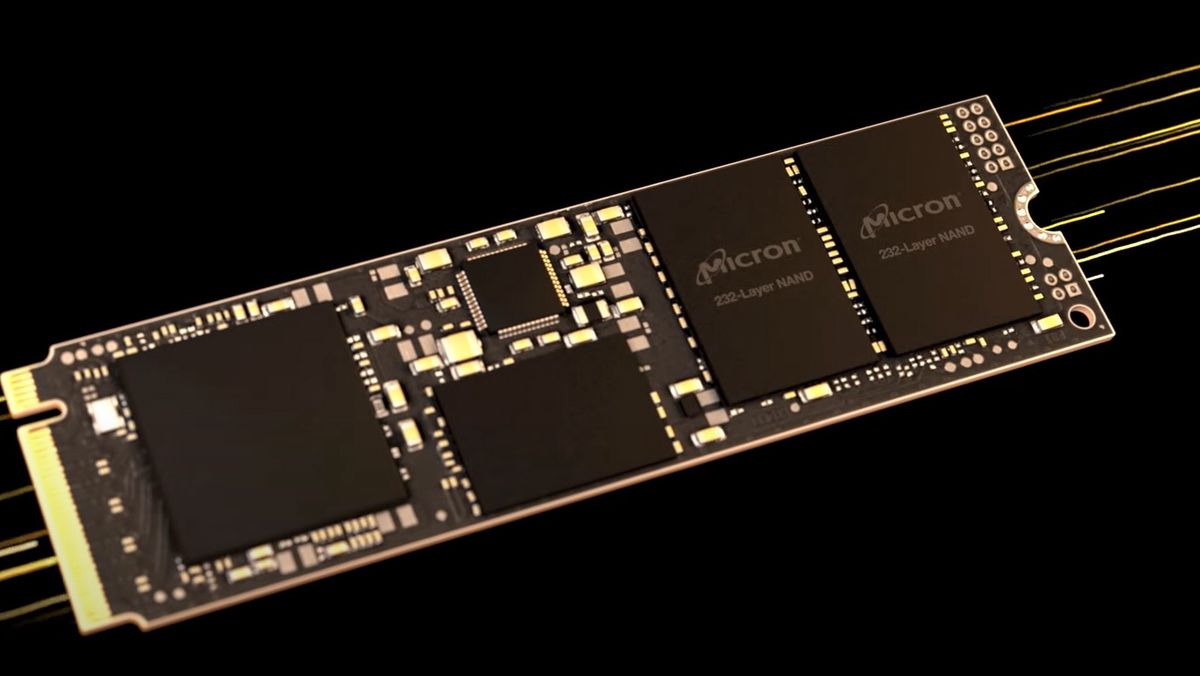

“Multitask Unified Model”

The new technology is based on what Google calls the Multitask Unified Model (MUM), which the company presented at its I/O conference earlier this year. Google touts MUM as a milestone in using artificial intelligence to understand information far more deeply than before and to handle more complex tasks with its search technology.

MUM will also be integrated directly into the search engine through a new feature Google calls "Things to Know": a kind of menu that gives users different types of additional information about what they are searching for, based on how people typically explore the topic. The aim is to give quick access to the most relevant and useful information first.

This feature is also due in the coming months, though no specific date has been given.

MUM will also have an impact on video. With it, Google will be able to identify topics related to what is shown in a video and provide the user with relevant links. According to Google, MUM will even be able to surface related topics that are not explicitly mentioned in the video, based on a deeper understanding of its content.

More information about the announcements can be found on Google's official blog.
